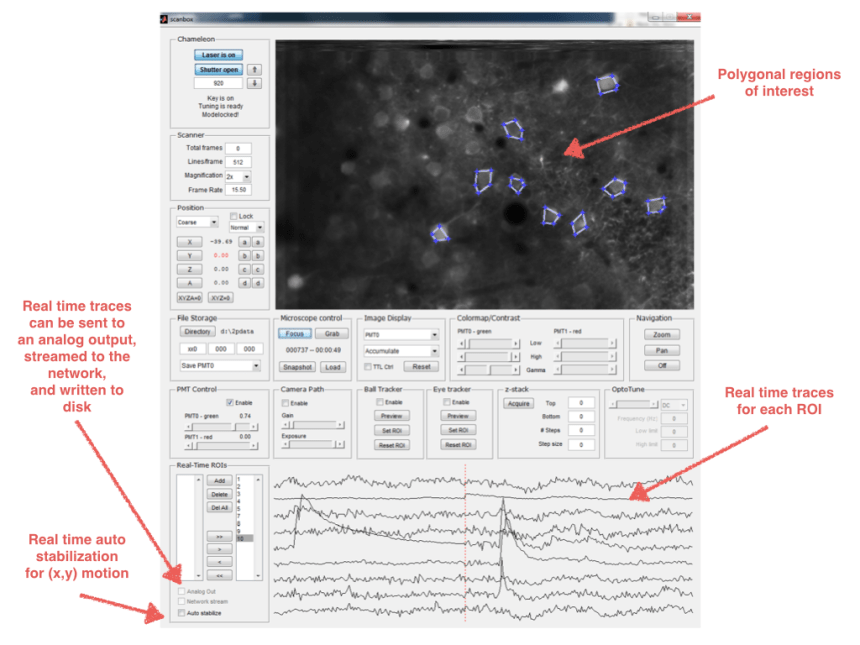

Here is a sneak preview of the new features to be released with Scanbox 2.0.

Some of the salient additions include:

Automatic stabilization: The system can automatically correct for rigid (x,y) translation in real time.
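If you are curious how rigid (x,y) correction can work, here is a minimal MATLAB sketch of one common technique: FFT-based cross-correlation between each frame and a reference image. It illustrates the idea under stated assumptions (synthetic images, whole-pixel shifts, circular boundaries). It is not Scanbox's actual implementation.

```matlab
% Minimal sketch (not Scanbox's implementation): estimate a rigid, whole-pixel
% (x,y) shift by FFT cross-correlation and undo it. Images are synthetic.
ref = zeros(64, 64);
ref(20:30, 25:35) = 1;                  % synthetic reference image
frame = circshift(ref, [3, -5]);        % simulated motion: 3 rows down, 5 columns left

% Circular cross-correlation via the FFT; its peak gives the displacement
xc = real(ifft2(fft2(frame) .* conj(fft2(ref))));
[~, idx] = max(xc(:));
[pr, pc] = ind2sub(size(xc), idx);

% Convert the 1-based, wrapped peak position into a signed shift
[nr, nc] = size(ref);
dy = pr - 1;  if dy > nr/2, dy = dy - nr; end
dx = pc - 1;  if dx > nc/2, dx = dx - nc; end

corrected = circshift(frame, [-dy, -dx]);   % shift the frame back onto the reference
fprintf('estimated shift: dy = %d, dx = %d\n', dy, dx);
```

In practice, the reference is usually an average of several frames. Sub-pixel accuracy requires interpolating around the correlation peak.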

Selection of regions of interest (ROIs): Lets you select the regions of interest to be tracked in real time. Once defined, the ROI polygons can be edited by translating them or by moving individual vertices. ROI calculations can be used in conjunction with automatic stabilization.

Real-time calculation of ROI traces: Scanbox computes the mean intensity in each ROI (other statistics are also possible) and displays its z-scored version in real time on a rolling graph, where a vertical dashed red line marks the present time.
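The per-ROI computation can be sketched in a few lines of MATLAB. This minimal example assumes ROIs are stored as cell arrays of linear pixel indices. It uses a running (Welford) estimate of the mean and standard deviation so that each new frame can be z-scored as it arrives. The synthetic frames and this choice of normalization are assumptions, not necessarily what Scanbox does.

```matlab
% Minimal sketch: per-ROI mean intensity for each incoming frame, z-scored
% with running (Welford) estimates of mean and standard deviation.
% ROI masks, frame size, and data are synthetic assumptions.
nr = 32; nc = 32; nframes = 100;
m1 = false(nr, nc); m1(5:8, 5:8) = true;       % hypothetical ROI 1
m2 = false(nr, nc); m2(20:25, 10:14) = true;   % hypothetical ROI 2
roi = {find(m1), find(m2)};                    % linear pixel indices per ROI
nroi = numel(roi);

traces = zeros(nframes, nroi);   % raw mean intensity per frame and ROI
z = zeros(nframes, nroi);        % z-scored traces (first frame left at 0)
n = 0; mu = zeros(1, nroi); M2 = zeros(1, nroi);

for k = 1:nframes
    % Synthetic frame: ROI 1 oscillates, ROI 2 drifts slowly upward
    f = 10 * ones(nr, nc);
    f(m1) = 10 + 5 * sin(2*pi*k/25);
    f(m2) = 10 + 0.1 * k;

    for r = 1:nroi
        traces(k, r) = mean(f(roi{r}));        % mean over the ROI's pixels
    end
    x = traces(k, :);

    % Welford update of the running mean and sum of squared deviations
    n = n + 1;
    delta = x - mu;
    mu = mu + delta / n;
    M2 = M2 + delta .* (x - mu);

    if n > 1
        sd = sqrt(M2 / (n - 1));
        sd(sd == 0) = 1;                       % avoid dividing by zero on flat traces
        z(k, :) = (x - mu) ./ sd;              % z-score of the newest sample
    end
end
```

The running estimates avoid storing the whole trace. A rolling window, or a baseline estimated over a fixed period, would be equally reasonable choices.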

Stimulus onset/offset marking: The beginning and end of each stimulus are marked on the trace graph with a light blue background. This makes it easy to verify that the cells are responding to the stimulus.

On-line processing: The ROI trace data can be mapped to analog output channels, streamed over the network, or streamed to disk for further on-line processing. So be ready to compute your tuning curves on the fly!
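As an illustration of computing a tuning curve on the fly, the MATLAB sketch below accumulates one ROI's response per stimulus condition, frame by frame. The per-frame condition labels (0 for blank, 1..ncond for a stimulus) and all data are hypothetical. How the labels reach your analysis depends on your stimulus setup.

```matlab
% Minimal sketch: a tuning curve accumulated frame by frame from one ROI
% trace and a hypothetical per-frame stimulus label (0 = blank,
% 1..ncond = stimulus condition). All data below are synthetic.
ncond = 4;
order = [1 3 2 4 1 3 2 4];          % hypothetical presentation order
resp  = [0.5 2.0 1.0 0.2];          % synthetic response to each condition
nf = 10;                            % frames per blank and per stimulus period

labels = zeros(2*nf*numel(order), 1);
trace  = zeros(size(labels));
for i = 1:numel(order)
    on = (2*i - 1)*nf + (1:nf);     % frames of the i-th stimulus period
    labels(on) = order(i);
    trace(on)  = resp(order(i));
end

% On-line accumulation: update running sums as each frame arrives
sums = zeros(ncond, 1);
counts = zeros(ncond, 1);
for k = 1:numel(trace)
    c = labels(k);
    if c > 0
        sums(c) = sums(c) + trace(k);
        counts(c) = counts(c) + 1;
    end
end
tuning = sums ./ max(counts, 1);    % mean response per condition so far
```

A real analysis would typically subtract a baseline, for example the mean over blank frames. The synthetic trace here has a baseline of zero.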

Here is a snapshot of how the GUI is looking at the moment:

Here is a movie without actual cells, but showing the ability to mark the location of the stimuli and the analog output. The signal is generated by waving my finger near the front of the objective.

If you need any other features, please leave your suggestion below (no, the system will not generate the figures and write the paper).

Looks great! Another simple suggestion: Add a counter to the arrows that control the polarizer for laser power, so that users can approximately track power adjustments.

Easy enough….

Will we be able to save each ROI’s location as well so that later we can map each saved trace to the region it was averaged over?

Yes

This looks fantastic. How did you get this done in real time in Matlab without messing up high-speed acquisition? I tried so many things with ScanImage and in the end resorted to timer functions. But Matlab's single-threadedness always got me: grabbing and computing ROI data always interfered with data acquisition, which made it pretty much useless for fancy things like closed-loop (BMI) experiments. I thought that the ‘commercial’ ScanImage 5 was moving to FPGAs for precisely that reason. You seem to have managed without fancy new hardware (including AO… very nice!).

Glad you like it… (And we are not done yet! More soon!)

Scanbox is part hardware, part software. We off-load the generation of the signals that drive the microscope to a custom-designed card (https://scanbox.wordpress.com/2014/03/13/the-heart-of-scanbox/), while Matlab focuses on data acquisition and processing.

Yes, of course – but it’s precisely the interaction between acquisition and custom processing that does not work so well with the standard ScanImage 4 + Thorlabs resonant scanner package. Are you outsourcing the ROI processing / frame registration to external compiled (GPU?) code, or do you stay within a single Matlab instance? I would assume that accessing a Matlab array for analysis that also acts as the acquisition frame buffer would be dangerous in your case too…

I have no experience with ScanImage. Scanbox is all Matlab, but it does use the GPU through the Parallel Computing Toolbox (no compiled code).

Thanks – I’ll try copying the buffered frames to the GPU and see if this takes some load off the system.

@Tobias: Have you tried compiled C/MEX files for the computationally expensive processing? I was modifying ScanImage 4 to get it running without Thorlabs components. By using MEX files written as parallelized code, I could reduce some critical steps (binning, un-interleaving the traces, flipping every second line) to a negligible 1–3 ms per 512x512x2 frame. But it depends on what you want to do – can you tell? If you want to use fancy Matlab built-ins like regionprops(), you can call them from MEX files, but it’s not possible to parallelize those parts. And I haven’t used the original ScanImage 4 with Thorlabs, so I don’t know how much free time the processor has between frames…

As far as I understand it, ScanImage 5 is moving to FPGAs for future online analysis, but this will not be implemented in ScanImage 5 yet. Dario’s system seems more advanced to me.
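For reference, the per-frame steps mentioned above (un-interleaving, flipping every second line, and binning) can also be written as vectorized MATLAB without MEX. This minimal sketch assumes two channels interleaved sample by sample and bidirectional scanning, with every second line acquired right to left. Real acquisition layouts differ, and the sketch makes no timing claims.

```matlab
% Minimal sketch in plain, vectorized MATLAB (no MEX). Assumed raw layout:
% two channels interleaved sample by sample, one scan line after another,
% with every second line acquired right to left (bidirectional scanning).
nr = 8; nc = 6;                          % tiny frame for illustration
raw = 1:(2*nr*nc);                       % synthetic samples: ch1, ch2, ch1, ...

% 1) Un-interleave the two channels
ch1 = raw(1:2:end);
ch2 = raw(2:2:end);

% 2) Arrange channel 1 as an nr x nc image (one scan line per row) and
%    reverse every second line to undo the return sweep
img1 = reshape(ch1, nc, nr)';
img1(2:2:end, :) = fliplr(img1(2:2:end, :));

% 3) 2x2 binning: average non-overlapping 2x2 blocks
b = 2;
binned = squeeze(mean(mean(reshape(img1, b, nr/b, b, nc/b), 1), 3));
```

The four-dimensional reshape groups each 2x2 block along dimensions 1 and 3, so averaging over those two dimensions bins the image without a loop.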

No, I did not try that. Interesting to hear what you did with SI, though (sorry, Dario, for discussing this here – I will stop that now). I will PM you, if you don’t mind. The idea would have been to do simple extraction of average intensities in a few simple ROIs for immediate closed-loop feedback and general live monitoring of activity. This is the same as what Dario did here, just without live registration. Nothing fancy. But also nothing top priority for me. I may pick it up at some later point. Please say hi to Rainer from me!

Tobias — real-time ROI stabilization: https://scanbox.wordpress.com/2014/10/31/real-time-motion-compensation-in-scanbox/

Dear Peter and Tobias,

This is a commercial response.

You may want to have a look at the latest version of the Sutter Instrument MCS software suite.

Some useful information on the latest version, MCS 2.1 can be found at:

http://suttermomblog.blogspot.com

The two-photon imaging program MScan 2.1 does pretty much what you want using commodity acquisition hardware, for either conventional or resonant scanning. No FPGAs, no custom board.

Thanks to extensive multithreading, MScan 2.1 has been optimized to run scripts for closed-loop (BMI) experiments in resonant scanning with minimal latency. These scripts have access to ROIs previously drawn on screen and to digital/analog train generation.

Hope you’ll find this interesting. By the way (this is for Tobias), you may want to discuss with Rick at Sutter if you want to upgrade your Thorlabs hardware with Sutter software.

Best regards,

Quoc

We are enjoying the real-time tracking feature. In version 2, it looks like the ROI coordinates are being marked and saved in a variable called roipix. However, the pixel locations are stored as a column of integer-valued doubles. How can we decode the location of the ROI from this data?

Hi Sandy,

The variable roipix holds linear indices into an image of the same size as the one captured.

So, for example, if your image is 512 x 796, you can see the mask corresponding to the first ROI by doing:

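```matlab
z = zeros(512,796);    % blank image with the same size as the captured frames
z(roipix{1}) = 1;      % set the pixels belonging to the first ROI
imagesc(z); truesize   % display the mask
```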
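Building on that reply, here is a minimal MATLAB sketch that decodes an ROI's linear indices into pixel coordinates with ind2sub. The mask is synthetic, and the centroid and bounding box are only illustrative summaries. The image size must match your own frames.

```matlab
% Minimal sketch: convert one ROI's linear pixel indices (the roipix
% representation) into row/column coordinates, a centroid, and a bounding box.
% 512 x 796 is the example size from the reply; the ROI here is synthetic.
sz = [512 796];
mask = false(sz);
mask(100:110, 200:215) = true;           % hypothetical ROI
roipix = {find(mask)};                   % same representation: linear indices

[rows, cols] = ind2sub(sz, roipix{1});   % decode linear index -> (row, col)
centroid = [mean(rows), mean(cols)];     % ROI center in pixels
bbox = [min(rows), max(rows), min(cols), max(cols)];   % [rmin rmax cmin cmax]
```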

Hello,

Is the ttl_log in the real-time data currently functional? I am assuming that is the variable that records the TTL pulses to mark whether the stimulus was present in the frame. But the variable seems to be just a column of zeros in its current form, even when the TTL pulses are being recognized and saved in the main info results structure.

Thanks,

Brian

Yes, it works. For this to become functional you need to split the TTL signal that is HIGH during the stimulus and LOW otherwise, and wire it to the AUX input of the AlazarTech card. It is the 2nd connector from the bottom (the side farther away from the LED).

Once you connect this input, you should see both the stimulus markers on top of the real-time traces and the data appearing in the ttl_log variable.
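Once ttl_log is populated, stimulus periods can be recovered with simple edge detection. This minimal MATLAB sketch assumes ttl_log holds one 0/1 value per frame (HIGH during the stimulus). That format is an assumption, so check it against your own files.

```matlab
% Minimal sketch: stimulus onset/offset frames from a binary TTL record.
% Assumption: ttl_log holds one 0/1 value per frame, HIGH during the stimulus.
ttl_log = [0 0 1 1 1 0 0 0 1 1 0 0]';    % synthetic example

d = diff([0; ttl_log(:) > 0; 0]);        % +1 at rising edges, -1 just after falling edges
onsets  = find(d == 1);                  % first frame of each stimulus
offsets = find(d == -1) - 1;             % last frame of each stimulus
```

Padding with zeros at both ends ensures that a stimulus already on at the first frame, or still on at the last frame, still produces a matching onset/offset pair.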